For a little over a year now, I've been using Docker to manage my local demo environment. It has also helped in my workshops by making it easy for any participant to get our platform up and running, regardless of what laptop they brought with them. I first shared this last December in a blog post entitled Docker Compose for the Sonatype Platform - Part 1. Then in February I added an NGINX server to the mix to offload SSL, along with some basic provisioning examples, in Running The Nexus Platform Behind NGINX Using Docker. Last July, Docker Desktop brought support for a certified Kubernetes implementation to the stable channel! This was a big deal for me because it meant I no longer had to manage a separate installation of Minikube; it was now all part of the package.

I found this blog with a tutorial for getting started that helped me install a Kubernetes dashboard and confirm that everything was working. Very exciting! Now all I had to do was figure out how to move my reference platform into Kubernetes. It turns out the team at Docker was one step ahead of me: they had been positioning docker-compose v3 files for just this purpose, along with a new tool, Docker Stack. A few keyword offerings to the Google helped me find this blog post: Docker Compose and Kubernetes With Docker For Desktop.

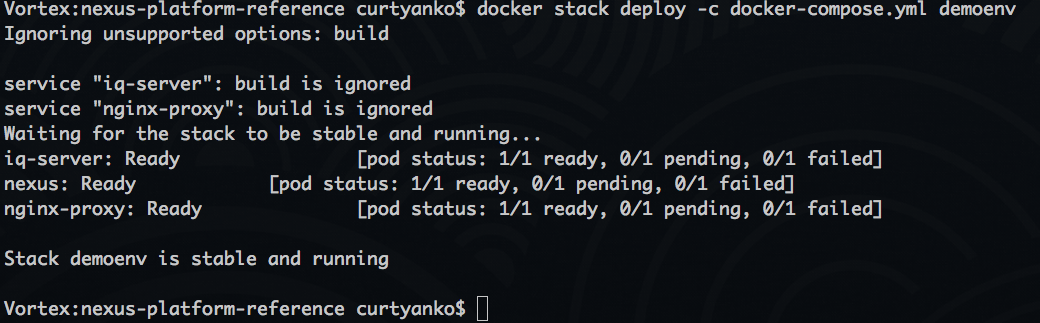

Holy cow, you mean all I have to do is run a docker stack command and the whole reference platform will be running in my local Kubernetes instance? To the terminal!
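As a rough text sketch of that deploy step: the stack name `demoenv` is the one used later in this post, while the compose file name `docker-compose.yml` and the `deploy_stack` helper are placeholders chosen for illustration. The `--orchestrator kubernetes` flag is an assumption based on the Docker CLI of that period; later Docker releases dropped Kubernetes support from `docker stack`, so this may not work on a current install.

```shell
# Sketch only: assumes Docker Desktop with Kubernetes enabled and a
# compose file in the current directory.
STACK_NAME="demoenv"                 # stack name used in this post
COMPOSE_FILE="docker-compose.yml"    # assumed compose file name

# Hypothetical helper: deploy the compose file as a stack on Kubernetes.
deploy_stack() {
  docker stack deploy \
    --orchestrator kubernetes \
    --compose-file "$COMPOSE_FILE" \
    "$STACK_NAME"
}

# Run it with:
#   deploy_stack
```

If Kubernetes is already the default orchestrator in your Docker Desktop settings, the `--orchestrator` flag should not be needed.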

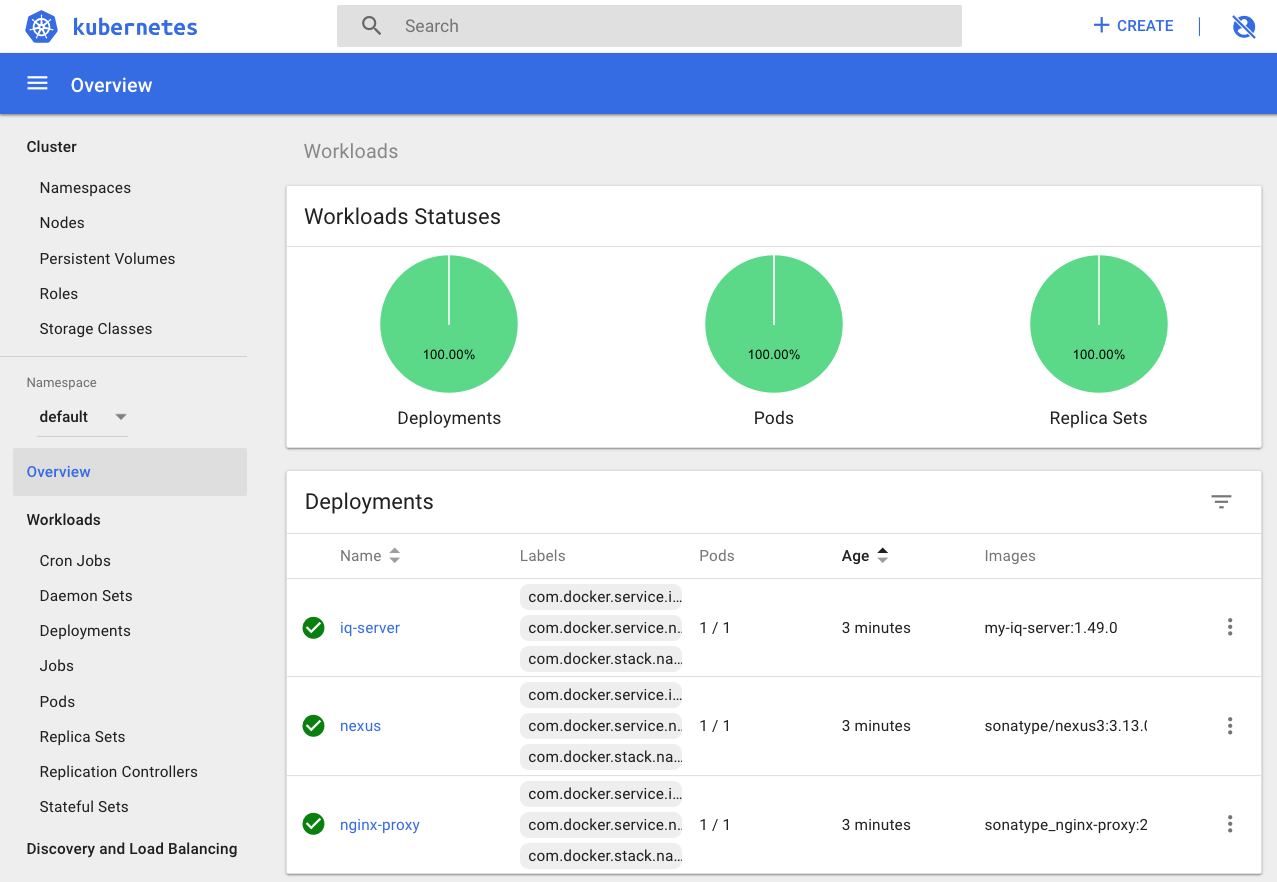

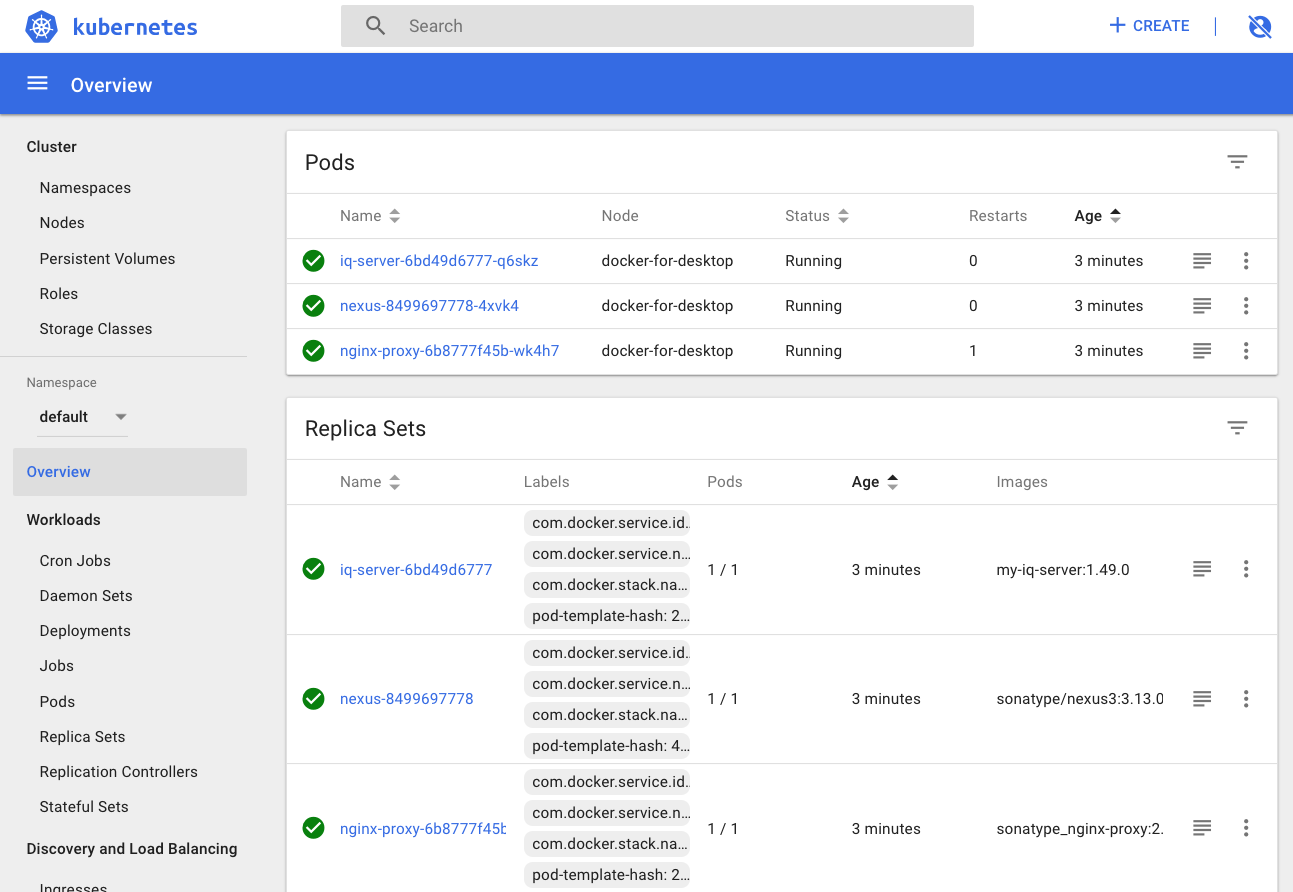

Logging into the dashboard, we can see all the elements created by the stack.

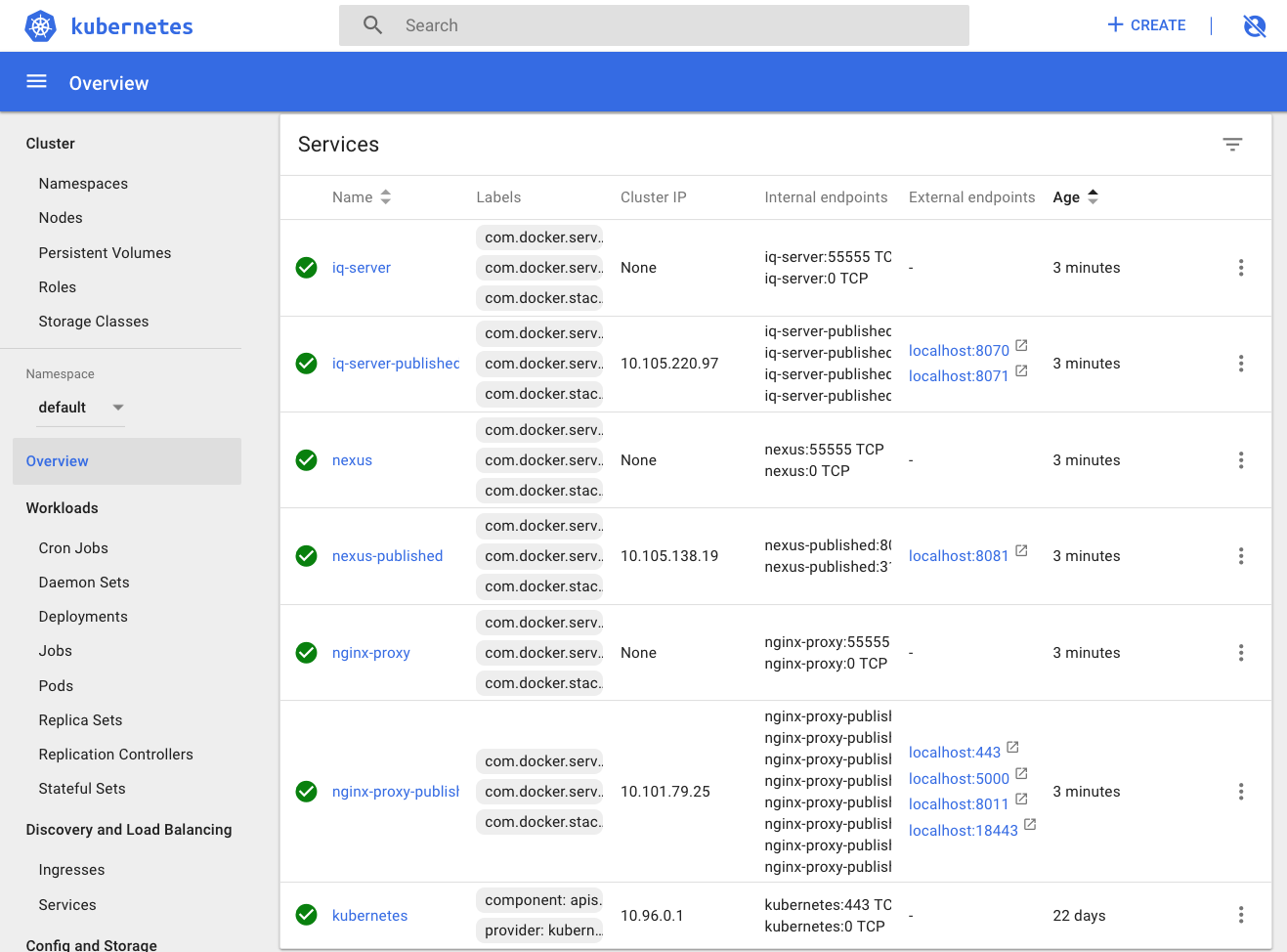

When we look at the Services, we see an interesting pattern. Docker stack has created two services for each Pod: one for external routes (the ones with 'publisher' in the name) and another for internal, pod-to-pod communication.

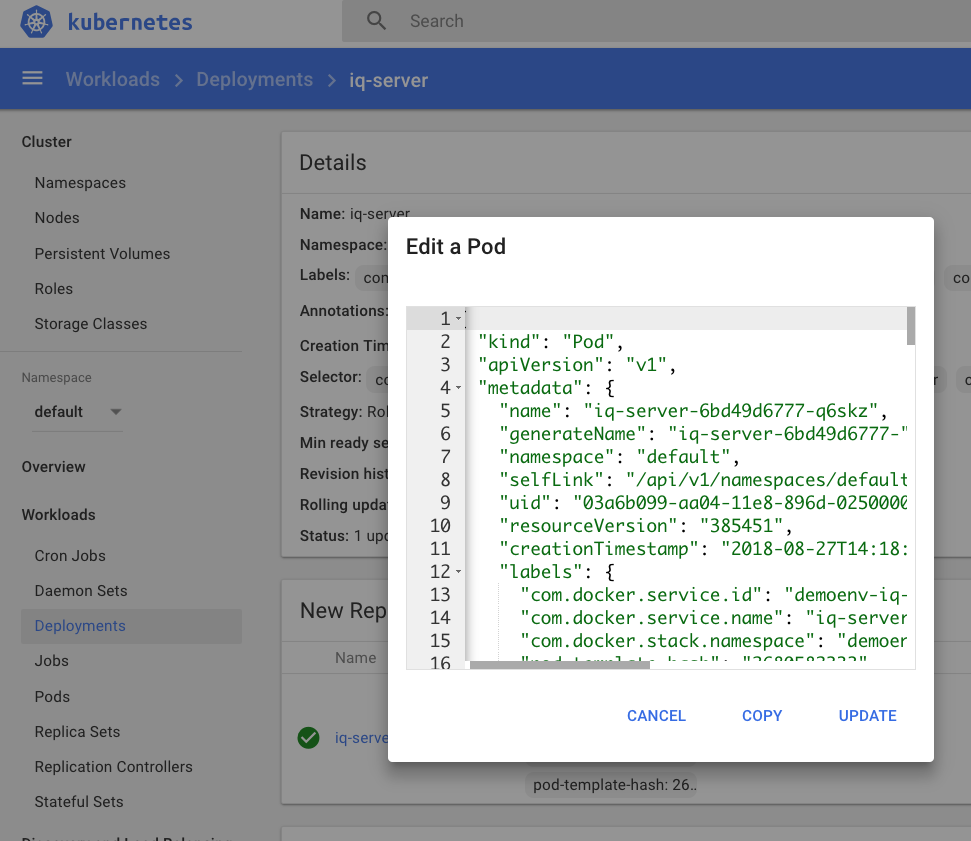
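The same Services can also be listed from the command line instead of the dashboard. This is a minimal sketch: the `list_stack_services` helper is hypothetical, and the `default` namespace is an assumption about where the stack lands when no namespace is specified.

```shell
# Sketch only: assumes kubectl is pointed at the Docker Desktop cluster.
NAMESPACE="default"   # assumed namespace for the deployed stack

# Hypothetical helper: list the Services so the paired
# external/internal entries for each pod can be compared.
list_stack_services() {
  kubectl get services --namespace "$NAMESPACE"
}

# Run it with:
#   list_stack_services
```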

Wow! Those 30 lines of the docker-compose file are sure a lot easier than creating a half dozen or so YAML files for Kubernetes, which we can easily see by looking in the dashboard.

If I scroll down, there are ~140 lines in the definition of just that one pod. But now we have a working example, along with examples of annotations.

Docker stack does ignore the 'build' directive in docker-compose files, so you might have to run docker-compose build before running docker stack if you haven't already built the two custom images I use.
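One way to avoid forgetting the build step is to chain the two commands so the deploy only runs when the build succeeds. This is a sketch under assumptions: the compose file name and the `build_and_deploy` helper are placeholders, and the `--orchestrator kubernetes` flag reflects the Docker CLI of that period rather than current releases.

```shell
# Sketch only: assumes docker-compose and Docker Desktop with Kubernetes.
STACK_NAME="demoenv"                 # stack name used in this post
COMPOSE_FILE="docker-compose.yml"    # assumed compose file name

# Hypothetical helper: build the custom images first, then deploy the
# stack only if the build exits successfully.
build_and_deploy() {
  docker-compose -f "$COMPOSE_FILE" build &&
    docker stack deploy \
      --orchestrator kubernetes \
      --compose-file "$COMPOSE_FILE" \
      "$STACK_NAME"
}

# Run it with:
#   build_and_deploy
```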

To stop the whole thing, just use docker stack rm demoenv. This stops all of the services and removes the pods and deployments, completely removing the whole stack from my Kubernetes environment. Now that's easy.

I'm amazed at how seamlessly everything moved over, including binding exposed ports to localhost as an endpoint. This meant that everything worked for me exactly as it had before, with my /etc/hosts entries allowing me to hit the NGINX instance and get routed to the right pod.

There is still plenty of work to do, like changing the local persistent volume to one declared in Kubernetes, but this is a huge step forward by the Docker team in aligning the way developers work with how things are run in operations. I look forward to seeing how Docker Stacks continue to evolve. In the next post we'll take a look at a shortcut for creating the Kubernetes YAML files from the docker-compose file without using docker stack.

Curtis Yanko is a Sr Principal Architect at Sonatype and a DevOps coach/evangelist. Prior to coming to Sonatype, Curtis started the DevOps Center of Enablement at a Fortune 100 insurance company and chaired an Open Source Governance Committee. When he isn’t working with customers and partners on how to build security and governance into modern CI/CD pipelines, he can be found raising service dogs or out playing ultimate frisbee during his lunch hour. Curtis is currently working on building strategic technical partnerships to help solve for the rugged DevOps toolchain.
